Real-Time Feedback in Live Betting Models

Live betting has transformed from intuition-based decisions to precise, data-driven strategies powered by real-time feedback systems. These systems analyze in-play data - like ball possession, shot quality (xG), and momentum shifts - within milliseconds to update odds dynamically. Here are the key takeaways:

- What It Does: Processes thousands of data points (e.g., passes, fouls, shots) in under 200 milliseconds to continuously adjust win probabilities and odds.

- How It Works: Combines Bayesian updating, Markov Chains, and live event data from providers like Sportradar and Opta.

- Why It Matters: Ensures odds reflect the live game, spotting opportunities like mismatched spreads or undervalued markets.

- Market Impact: The sports analytics market, valued at $854.5M in 2023, is projected to reach $4.74B by 2030, driven by demand for advanced tools.

Platforms like WagerProof make these insights accessible to bettors by offering real-time outlier detection, multi-model analysis, and tools like WagerBot Chat for live, actionable advice.

This article explores how real-time feedback loops function, the data sources they rely on, and how tools like WagerProof empower smarter betting decisions.


How Real-Time Feedback Loops Work

How Real-Time Betting Feedback Loops Work: Data Processing to Odds Adjustment

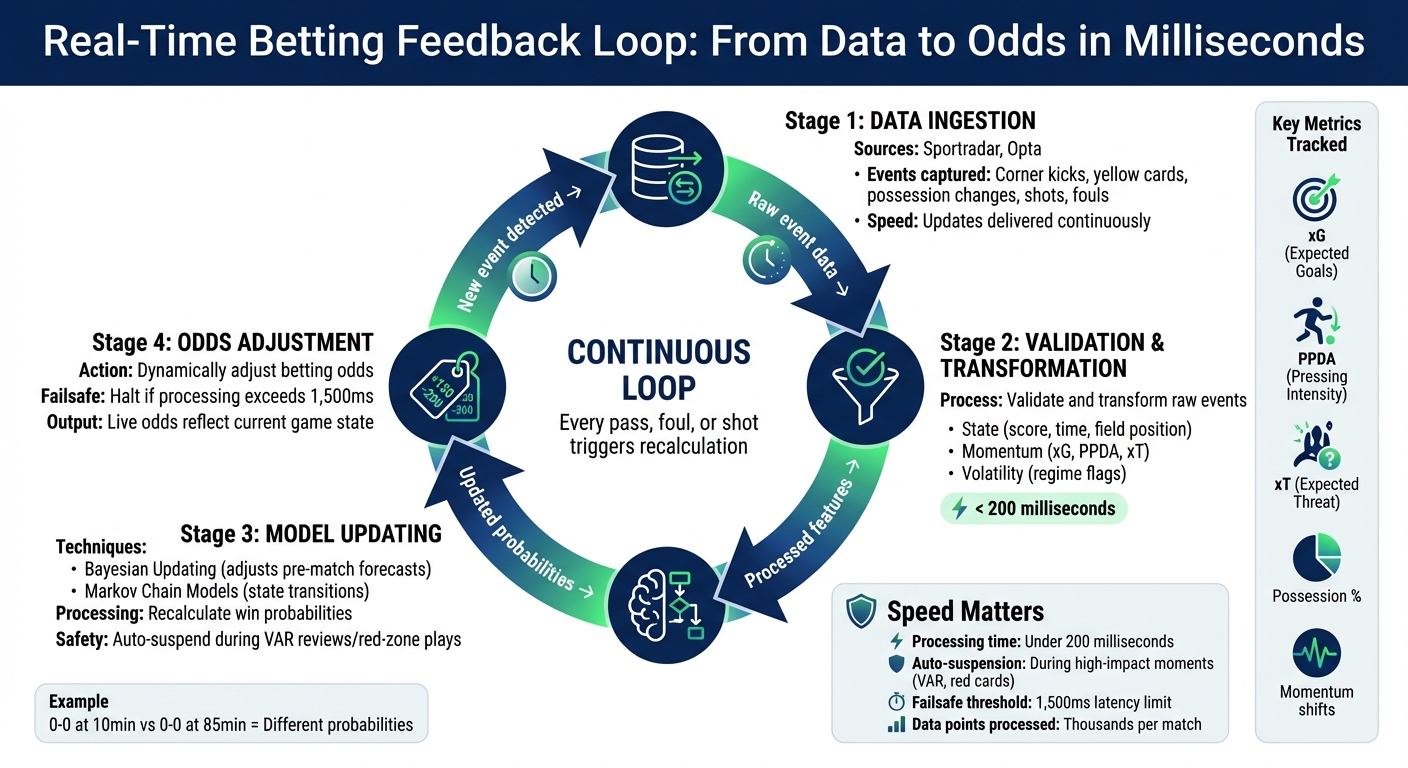

Live betting feedback loops process new match data on the fly, updating models and adjusting odds in real time. Unlike pre-match models, which run just once, live systems treat every event - like a pass, foul, or shot - as a signal to recalculate probabilities continuously.

Core Elements of Feedback Loops

The process kicks off with data ingestion. Official providers such as Sportradar or Opta deliver live updates, including events like corner kicks, yellow cards, or possession changes. These raw events are validated and then transformed into key features: state, momentum, and volatility.

Once validated, the system uses these features to refine predictions. Two main modeling techniques come into play: Bayesian updating, which adjusts pre-match forecasts with each new event, and Markov Chain models, which track probabilistic state transitions.
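As a rough illustration of the Bayesian updating step, the sketch below applies Bayes' rule to a pre-match win probability each time an event arrives. The function name and every likelihood value are hypothetical placeholders; a production model would estimate them from historical data.

```python
def bayes_update(prior: float, p_event_if_win: float, p_event_if_not: float) -> float:
    """Return P(win | event) from a prior P(win) and two event likelihoods."""
    numerator = prior * p_event_if_win
    evidence = numerator + (1.0 - prior) * p_event_if_not
    return numerator / evidence


# Assumed pre-match forecast: the home side wins 45% of the time.
win_prob = 0.45

# Each tuple is (P(event | home wins), P(event | home does not win)).
# Illustrative numbers only, not measured values.
live_events = [
    (0.60, 0.40),  # home shot on target
    (0.30, 0.50),  # home yellow card
]

for p_if_win, p_if_not in live_events:
    win_prob = bayes_update(win_prob, p_if_win, p_if_not)

print(round(win_prob, 3))
```

Each posterior becomes the prior for the next event, which is what lets the forecast move continuously instead of being recomputed from scratch.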

To maintain fairness, modern systems automatically suspend markets during high-impact moments - like VAR reviews or red-zone plays - to prevent bets on nearly certain outcomes while recalculations take place. Additionally, if data processing exceeds 1,500 milliseconds during volatile moments, the system halts bets to avoid acting on outdated information.

Benefits of Real-Time Feedback

Real-time feedback ensures betting models stay in sync with the live game, rather than relying on outdated snapshots. For example, when a star player gets injured or a team shifts tactics, the model detects the change through metrics like PPDA (passes per defensive action, a measure of pressing intensity) and Expected Threat (xT).

This constant recalibration prevents outdated predictions. A 0–0 scoreline at the 10th minute implies vastly different win probabilities compared to the same score at the 85th minute. Feedback loops adjust odds dynamically, factoring in game clock, possession trends, and momentum shifts. They also identify hidden opportunities - like spotting a mismatch between Expected Threat buildup and shot conversion rates, which could signal an underpriced "next goal" market before others catch on.
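The 0–0 comparison can be made concrete with a simple independent-Poisson goal model. This is a minimal sketch, assuming constant scoring rates (the 1.6 and 1.1 goals per 90 minutes are invented) and ignoring stoppage time and game-state effects.

```python
from math import exp, factorial


def poisson_pmf(k: int, lam: float) -> float:
    """Probability of exactly k goals when lam goals are expected."""
    return exp(-lam) * lam**k / factorial(k)


def outcome_probs(home_rate: float, away_rate: float, minutes_left: float,
                  max_goals: int = 10) -> tuple[float, float, float]:
    """Home/draw/away probabilities from a level score with time remaining."""
    lam_home = home_rate * minutes_left / 90
    lam_away = away_rate * minutes_left / 90
    home = draw = away = 0.0
    for h in range(max_goals + 1):
        for a in range(max_goals + 1):
            p = poisson_pmf(h, lam_home) * poisson_pmf(a, lam_away)
            if h > a:
                home += p
            elif h == a:
                draw += p
            else:
                away += p
    return home, draw, away


# Same 0-0 scoreline, very different time remaining.
early = outcome_probs(1.6, 1.1, minutes_left=80)  # 10th minute
late = outcome_probs(1.6, 1.1, minutes_left=5)    # 85th minute
print("draw early:", round(early[1], 3), "draw late:", round(late[1], 3))
```

With only five minutes left, most of the probability mass sits on the draw, which is why the clock has to be an input to every live update.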

These mechanisms not only boost accuracy but also power tools like WagerProof’s real-time analysis. By leveraging feedback principles, WagerProof’s features, such as automated outlier detection and WagerBot Chat, provide bettors with smarter, data-driven insights across multiple models and prediction markets.

Data Sources for Live Betting Feedback

Live betting models rely on three key data streams: live odds, user bets, and in-play events. These inputs fuel the live recalibration process, ensuring that models stay aligned with the dynamic conditions of a game. Live odds feeds track where sharp money is moving, user betting patterns highlight public sentiment and demand surges, and in-play event data captures real-time game actions. Together, they enable models to adjust probabilities in milliseconds and identify value opportunities before the broader market reacts.

Live Odds and Market Signals

Modern systems aggregate odds from hundreds of bookmakers simultaneously, prioritizing data from professional operators like Pinnacle and Singbet, which attract sharp bettors. Each book’s odds are converted into implied probabilities so the feeds can be compared on a common scale. By combining them, models create a consensus price - a more reliable baseline than relying on a single sportsbook’s line.
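One way to build such a consensus is sketched below, assuming decimal odds. The book names, prices, and weights are made up, and proportional normalization is only one of several ways to remove the bookmaker margin.

```python
def fair_probs(decimal_odds: list[float]) -> list[float]:
    """Convert one book's decimal odds to probabilities that sum to 1."""
    raw = [1.0 / o for o in decimal_odds]
    overround = sum(raw)  # above 1 when the book has a margin built in
    return [p / overround for p in raw]


def consensus_probs(books: dict[str, list[float]],
                    weights: dict[str, float]) -> list[float]:
    """Weighted average of each book's margin-free probabilities."""
    total_weight = sum(weights[name] for name in books)
    n_outcomes = len(next(iter(books.values())))
    blended = [0.0] * n_outcomes
    for name, odds in books.items():
        for i, p in enumerate(fair_probs(odds)):
            blended[i] += weights[name] * p / total_weight
    return blended


# Hypothetical two-way market; the sharper book gets three times the weight.
books = {"sharp_book": [1.95, 1.95], "soft_book": [1.80, 2.05]}
weights = {"sharp_book": 3.0, "soft_book": 1.0}
consensus = consensus_probs(books, weights)
print([round(p, 4) for p in consensus])
```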

Platforms deliver odds updates within 200 milliseconds using WebSocket streaming, ensuring that bets reflect the most current market conditions. As Daniel Ludlum from West London Sport explains:

A delay of even a few milliseconds can result in outdated odds. Those tiny gaps become opportunities for sharp users to exploit.

This shift to WebSocket streaming replaces slower REST API polling, which can lag by 1–5 seconds, providing sub-second updates that are crucial in live betting.

WagerProof’s automated tools use these live odds feeds to detect spread mismatches across sportsbooks and prediction markets. When consensus pricing diverges from individual book lines, the system flags potential value bets, giving users an edge similar to what professional bettors seek.
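The idea of flagging a line that diverges from consensus can be sketched generically as an expected-value check. This is not WagerProof's actual method: the function names, the 3% threshold, and the quotes are assumptions made for illustration.

```python
def expected_value(consensus_prob: float, book_odds: float) -> float:
    """Expected profit per unit staked if the consensus probability is right."""
    return consensus_prob * book_odds - 1.0


def flag_value(consensus_prob: float, quotes: dict[str, float],
               min_edge: float = 0.03) -> list[str]:
    """Return the books whose price beats consensus by at least min_edge."""
    return sorted(
        book for book, odds in quotes.items()
        if expected_value(consensus_prob, odds) >= min_edge
    )


# Hypothetical quotes on an outcome the consensus rates at 50%.
flagged = flag_value(0.50, {"book_a": 2.10, "book_b": 1.95})
print(flagged)
```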

User Betting Patterns

Monitoring public betting behavior reveals more than just sentiment - it uncovers market demand and highlights overreactions to specific events. Sportsbooks analyze bet volumes, stake sizes, and activity spikes to adjust margins and manage risk. For bettors, these patterns can signal when a line is shifting or when the public is chasing noise rather than value.

Live betting now generates over 70% of total sports betting revenue for many sportsbooks, with in-play wagers accounting for a similar share in Europe. Machine learning systems analyze user behavior and betting history to identify trends, offering personalized recommendations and time-sensitive in-play offers based on how users respond to live events.

Advanced models also filter out short-term betting spikes. For instance, if a sudden spike follows a dangerous soccer attack but sustained pressure doesn’t follow, the model may delay adjustments for 5–10 seconds to let the market stabilize. This approach prevents overreactions and ensures disciplined execution during chaotic moments. While these financial signals are crucial, in-play event data provides a direct link to the game’s unfolding dynamics.

In-Play Event Data

Unlike market signals or betting trends, live event data reflects the reality of the match as it happens. Providers like Sportradar and Opta supply live feeds of game events - corner kicks, shots, fouls, possession changes, and injuries - that are used as momentum features in real-time models. These feeds enable metrics like expected goals (xG), pressing intensity (PPDA), and the duration of sequences without a scoring attempt.

Critical events, such as red cards or injuries, prompt immediate model updates and can even temporarily suspend markets. Some events act as regime flags, altering not just probabilities but the entire logic and risk structure of a model.

For example, the NFL’s partnership with Genius Sports, extended through 2029, ensures exclusive distribution of official play-by-play data and Next Gen Stats to sportsbooks. Similarly, FIFA has appointed Stats Perform as the global distributor of betting data for select events, providing fast, reliable pipelines for live match updates and player statistics. These exclusive agreements highlight the importance of low-latency, official data in powering real-time betting systems.

Building Models That Use Real-Time Feedback

Creating a live betting model that can adapt to real-time feedback demands a carefully designed system. These models must process streaming data, update probabilities, and validate outcomes - all within milliseconds. The backbone of such systems is an event-driven architecture capable of handling thousands of updates per second without delay or data loss. These principles extend the earlier discussion on real-time feedback into the technical construction of these models.

Data Integration and Processing

Live betting platforms revolve around event-driven architectures, leveraging tools like Apache Kafka or Confluent Cloud to manage the constant influx of data - odds updates, play-by-play events, and user activity. These systems use a "push model", where updates are sent directly to clients via persistent connections like WebSockets, eliminating the need for clients to request data actively. As systems architect Afolabi Ifeoluwa James puts it:

In the betting economy, 7 seconds is a lifetime.

To handle this rapid data flow, the ingestion layer relies on high-performance languages like Go, Rust, or Node.js, which process data from providers such as Sportradar or Genius Sports. Kafka plays a central role, decoupling data ingestion from downstream tasks like recalculating odds or settling bets. Impressively, high-performance setups can serve over 500,000 concurrent users with sub-30ms latency while keeping costs under $150 per month.

Payload optimization is crucial for reducing latency. Platforms often use Protobuf instead of JSON to shrink data packets by 50–70%, dropping typical sizes from 800 bytes to 250 bytes. Kafka producer settings like linger.ms=0 send each record immediately instead of waiting to fill a batch. For state management, in-memory data stores like Redis provide sub-millisecond lookups for current odds and game states. Advanced setups even employ CPU pinning, dedicating processor cores to Kafka brokers and consumers to avoid delays caused by context switching.
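The size saving comes largely from dropping field names and text encoding. The sketch below uses Python's standard `struct` module as a stand-in for a schema-based binary format; it is not Protobuf, whose wire format differs, and the message fields are invented.

```python
import json
import struct

# Hypothetical odds update for a two-way market.
update = {"match_id": 48213, "market_id": 7, "home_odds": 1.85, "away_odds": 2.05}

# Text encoding: field names travel with every message.
json_payload = json.dumps(update).encode("utf-8")

# Fixed binary layout: "<" means little-endian with no padding,
# then uint32, uint16, and two float64 values.
LAYOUT = struct.Struct("<IHdd")
binary_payload = LAYOUT.pack(
    update["match_id"], update["market_id"],
    update["home_odds"], update["away_odds"],
)

decoded = LAYOUT.unpack(binary_payload)
print(len(json_payload), "bytes as JSON,", len(binary_payload), "bytes as binary")
```

Both sides must agree on the layout in advance, which is the trade-off a schema-based format makes in exchange for smaller messages.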

Machine Learning for Dynamic Adjustments

Once data is efficiently ingested and processed, machine learning models come into play, translating this stream of information into actionable adjustments. Feature engineering is a key step here. Effective models incorporate state features (e.g., score, time remaining), momentum features (like xG since the last update), and regime flags (e.g., red cards, timeouts) to account for shifts in game dynamics. Recurrent and transformer models are often used to analyze recent events, maintaining a hidden "momentum state". Some systems also use Markov chains to model game states and predict transitions, such as "Corner → Header → Goal".
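A "Corner → Header → Goal" chain can be sketched as a small transition table. The states and probabilities below are invented for illustration; a real model would have many more states and would fit the transitions from event data.

```python
# Hypothetical transition probabilities; each row sums to 1.
TRANSITIONS = {
    "corner":  {"header": 0.30, "cleared": 0.70},
    "header":  {"goal": 0.10, "cleared": 0.90},
    "goal":    {"goal": 1.0},       # absorbing state
    "cleared": {"cleared": 1.0},    # absorbing state
}


def advance(dist: dict[str, float]) -> dict[str, float]:
    """Push a probability distribution over states forward by one step."""
    nxt: dict[str, float] = {}
    for state, mass in dist.items():
        for target, p in TRANSITIONS[state].items():
            nxt[target] = nxt.get(target, 0.0) + mass * p
    return nxt


def prob_in_state(start: str, target: str, steps: int) -> float:
    """Probability of being in `target` after `steps` transitions from `start`."""
    dist = {start: 1.0}
    for _ in range(steps):
        dist = advance(dist)
    return dist.get(target, 0.0)


# Chance that a corner has become a goal two transitions later.
print(prob_in_state("corner", "goal", steps=2))
```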

Calibration is critical to ensure the accuracy of probabilities. Models often use a "discard window" - a brief period (5–10 seconds) following major events like goals or red cards - to allow the market to stabilize and avoid overreactions. Automated safeguards, such as kill switches, stop execution if losses exceed 5× the median or if calibration error doubles the target.

Validation and Continuous Improvement

In live betting, where traditional closing lines don’t exist, model performance is often measured using Live Execution Value (LEV) - the mid-odds three seconds after a bet is placed. This serves as a proxy for whether the model identified value before the market adjusted.

Latency is tracked at every stage, with systems logging timestamps like arrival_timestamp_feed, model_update_timestamp, and bet_dispatch_timestamp to measure market drift risk. Audits use hash-based checks to verify every bet and its corresponding model state. A validation buffer (typically 5–8 seconds) monitors the Kafka stream for material events that could impact bets placed during this window.
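The timestamp logging and the execution check can be sketched together. The three timestamp names come from the text above; the record layout, the other field names, and the LEV sign convention (positive means the odds taken beat the later mid-price) are assumptions.

```python
from dataclasses import dataclass


@dataclass
class BetRecord:
    arrival_timestamp_feed: int   # ms, when the feed event arrived
    model_update_timestamp: int   # ms, when the model finished updating
    bet_dispatch_timestamp: int   # ms, when the bet was sent
    taken_odds: float             # decimal odds obtained on the bet
    mid_odds_after_3s: float      # market mid-odds three seconds later


def stage_latencies_ms(bet: BetRecord) -> dict[str, int]:
    """Break the feed-to-dispatch delay into its stages."""
    return {
        "feed_to_model": bet.model_update_timestamp - bet.arrival_timestamp_feed,
        "model_to_dispatch": bet.bet_dispatch_timestamp - bet.model_update_timestamp,
        "feed_to_dispatch": bet.bet_dispatch_timestamp - bet.arrival_timestamp_feed,
    }


def live_execution_value(bet: BetRecord) -> float:
    """Assumed convention: above 0 means the odds taken beat the later mid-price."""
    return bet.taken_odds / bet.mid_odds_after_3s - 1.0


bet = BetRecord(1000, 1040, 1065, taken_odds=2.10, mid_odds_after_3s=2.00)
print(stage_latencies_ms(bet), round(live_execution_value(bet), 4))
```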

Continuous improvement is driven by comparing predictions against actual outcomes. Models monitor calibration errors and adjust feature weights based on the predictive value of different signals, refining their understanding of which events significantly impact probabilities and which are merely noise. This iterative learning process ensures the model stays aligned with the fast-changing dynamics of live games, mirroring the principles of constant recalibration discussed earlier.

Using WagerProof for Real-Time Feedback

Crafting advanced betting models often requires a robust technical setup - something most bettors simply don't have. That's where WagerProof steps in, offering professional-grade, real-time feedback that's easy to access. By leveraging data from prediction markets, sportsbooks, and live game feeds, the platform delivers actionable insights without forcing users to build complex systems themselves. It essentially brings the power of sophisticated modeling to everyday bettors.

Automated Outlier Detection

One standout feature of WagerProof is its automated detection of market inefficiencies. The system constantly monitors for discrepancies between prediction markets and traditional sportsbooks - exactly the kind of gaps professional bettors look for. For example, when in-game events cause spreads to shift, WagerProof identifies mismatches and sends alerts. These signals include recommendations to fade public betting trends when lines appear inflated, or value bet suggestions when odds diverge from statistical models. All of this is powered by live data streams, eliminating the need for manual tracking.

WagerBot Chat for Live Analysis

WagerProof takes automation a step further with WagerBot Chat, an interactive tool designed for live, in-game analysis. Unlike generic AI tools, WagerBot connects directly to professional data sources, ensuring its recommendations are grounded in real statistics. Users can ask specific questions during games, like "Does the weather favor the under?" or "How often does this team come back after trailing at halftime?" WagerBot pulls together current odds, injury reports, weather updates, and predictive models to deliver a comprehensive response. This ensures accurate, data-driven advice that reflects the actual state of the game and market conditions.

Transparency and Education

WagerProof doesn’t just hand out picks - it focuses on educating users about finding value. Each recommendation is accompanied by detailed reasoning, including the data, metrics, and historical patterns behind it. Users can see how real-time events impact probabilities, which signals tend to hold up over time, and when to trust models versus market consensus. To further enhance understanding, the platform features expert picks from its Real Human Editors, complete with full explanations. This combination of transparency and education equips bettors with the tools they need to make informed, confident decisions throughout a live game.

Real-Time Validation and Model Metrics

Keeping a feedback-driven betting model on track requires constant real-time evaluation. Without vigilant monitoring, even the most sophisticated models can falter when market conditions shift unexpectedly or mid-game events disrupt predictions.

Key Performance Metrics

The right metrics can reveal whether your model is actually finding value or just missing the mark. These metrics work hand-in-hand with feedback loop adjustments, offering a way to measure how well your model responds to real-world conditions.

One critical metric is Live Execution Value (LEV), which compares the odds you took against the market mid-odds approximately three seconds after a bet is placed. If your odds are consistently worse than that later mid-price, it may signal issues like latency or poor responsiveness. Another important measure is the Expected Calibration Error (ECE), which checks whether predicted probabilities align with actual outcomes. When ECE exceeds its live target - doubling, for instance - it’s a clear sign that your model’s confidence is out of sync with reality.
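ECE is commonly computed by binning predictions and comparing each bin's average confidence with its observed hit rate. The sketch below uses ten equal-width bins, which is a conventional but arbitrary choice, and the sample predictions are invented.

```python
def expected_calibration_error(probs: list[float], outcomes: list[int],
                               n_bins: int = 10) -> float:
    """Weighted mean gap between predicted probability and observed frequency."""
    bins: list[list[tuple[float, int]]] = [[] for _ in range(n_bins)]
    for p, y in zip(probs, outcomes):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 goes in the last bin
        bins[idx].append((p, y))

    total = len(probs)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(p for p, _ in members) / len(members)
        hit_rate = sum(y for _, y in members) / len(members)
        ece += len(members) / total * abs(avg_conf - hit_rate)
    return ece


# Five bets priced at 80%: four wins and one loss is well calibrated.
calibrated = expected_calibration_error([0.8] * 5, [1, 1, 1, 1, 0])
# Four bets priced at 90% that all lose is badly miscalibrated.
overconfident = expected_calibration_error([0.9] * 4, [0, 0, 0, 0])
print(round(calibrated, 3), round(overconfident, 3))
```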

For two-way markets, like moneylines, a market symmetry check helps ensure that the combined fair probabilities for both outcomes (e.g., home win and away win) are close to 1.0. If they deviate significantly, it could mean the model isn’t properly accounting for bookmaker margins or has failed to adjust to market changes. Monitoring these metrics continuously helps keep live betting models aligned with the ever-changing dynamics of the game.
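The symmetry check itself is a one-line comparison. In this sketch the 0.01 tolerance is an assumed value, not one given in the text.

```python
def symmetry_gap(p_home: float, p_away: float) -> float:
    """How far the two fair probabilities are from summing to 1."""
    return abs(p_home + p_away - 1.0)


def passes_symmetry(p_home: float, p_away: float, tolerance: float = 0.01) -> bool:
    """True when a two-way market's fair probabilities are consistent."""
    return symmetry_gap(p_home, p_away) <= tolerance


print(passes_symmetry(0.58, 0.42))  # consistent pair
print(passes_symmetry(0.58, 0.47))  # margin has probably leaked into the estimate
```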

Real-Time Drift Detection

As game conditions evolve, models can gradually drift, leading to performance issues. Keeping an eye on rolling performance indicators can help you spot this drift before it causes major losses. For example, session drawdowns exceeding five times the median loss are a red flag. Similarly, if ECE doubles its target level, it suggests the model’s predictions have become unreliable.

To minimize damage, automated kill switches can halt execution when drawdowns surpass five times the median loss. Another important indicator is price deviations that exceed 10–12% from market consensus - these often point to stale quotes or data errors rather than genuine betting opportunities. If these issues persist over three consecutive match days, it’s time to revisit model features like tempo and possession normalization to ensure they still reflect current league trends. These drift indicators lay the groundwork for more robust anomaly controls.
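The price-deviation filter and the three-match-day rule can be sketched as two small checks. The function names are hypothetical, and the 10% default takes the lower end of the 10–12% range mentioned above.

```python
def relative_deviation(quote_odds: float, consensus_odds: float) -> float:
    """Distance of a quote from consensus, as a fraction of consensus."""
    return abs(quote_odds - consensus_odds) / consensus_odds


def looks_stale(quote_odds: float, consensus_odds: float,
                max_deviation: float = 0.10) -> bool:
    """Treat a far-off quote as a probable stale price or data error."""
    return relative_deviation(quote_odds, consensus_odds) > max_deviation


def needs_feature_review(daily_flags: list[bool], run_length: int = 3) -> bool:
    """True when the most recent `run_length` match days were all flagged."""
    return len(daily_flags) >= run_length and all(daily_flags[-run_length:])


print(looks_stale(2.50, 2.00))                           # 25% away from consensus
print(needs_feature_review([False, True, True, True]))   # three flagged days in a row
```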

Anomaly Suspension Protocols

Once drift is detected, strict protocols are necessary to protect your bankroll from erratic market behavior. In-play events like goals, injuries, or red cards can temporarily throw markets into chaos. To avoid trading on this noise, suspend model activity for 5–10 seconds after such events.

Latency-based failsafes provide additional protection. If your real-time sports data platform lags by more than 1,500 milliseconds during high-volatility moments, the system should automatically abort bet dispatch to prevent decisions based on outdated information. Exposure limits are also crucial - losses during volatile periods should never exceed 6% of your bankroll.

| Protocol Type | Trigger Condition | Action Taken |
|---|---|---|
| Latency Failsafe | Data age >1,500 ms in volatility | Auto-abort bet dispatch |
| Event Discard | Major event (goal, red card) | 5–10s suspension of trading |
| Kill Switch | Drawdown >5× median loss | Halt all model execution |
| Calibration Guard | ECE >2× target | Suspend for recalibration |
| Exposure Cap | Open in-play loss >6% of bankroll | Pause until settlements free capital |

These protocols act as a safety net, ensuring that even during volatile periods, your model operates within controlled boundaries.
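The five protocols can be expressed as a single guard function that runs before every bet. The thresholds come from the table; the `LiveState` fields, the action labels, and the 10-second default (the upper end of the 5–10 second window) are assumptions.

```python
from dataclasses import dataclass


@dataclass
class LiveState:
    data_age_ms: float
    high_volatility: bool
    seconds_since_major_event: float
    session_drawdown: float
    median_loss: float
    ece: float
    ece_target: float
    open_inplay_loss: float
    bankroll: float


def triggered_protocols(s: LiveState, discard_window_s: float = 10.0) -> list[str]:
    """Return every protective action the current state calls for."""
    actions = []
    if s.high_volatility and s.data_age_ms > 1500:
        actions.append("abort_bet_dispatch")         # Latency Failsafe
    if s.seconds_since_major_event < discard_window_s:
        actions.append("suspend_trading")            # Event Discard
    if s.session_drawdown > 5 * s.median_loss:
        actions.append("halt_model_execution")       # Kill Switch
    if s.ece > 2 * s.ece_target:
        actions.append("suspend_for_recalibration")  # Calibration Guard
    if s.open_inplay_loss > 0.06 * s.bankroll:
        actions.append("pause_until_settlement")     # Exposure Cap
    return actions


calm = LiveState(200, False, 120, 10, 5, 0.02, 0.03, 20, 1000)
stressed = LiveState(1800, True, 4, 40, 5, 0.08, 0.03, 90, 1000)
print(triggered_protocols(calm))
print(triggered_protocols(stressed))
```

Returning every triggered action, rather than stopping at the first, keeps the audit log complete when several guards fire at once.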

Micro-Betting and Minute-Based Feedback

Micro-betting takes live betting to a whole new level by focusing on specific, quick moments in a game - like the next pitch in baseball, the result of the next basketball possession, or even the outcome of a single tennis serve. Instead of waiting hours for results, these bets are resolved in seconds or minutes. From 2022 to 2024, micro-betting saw explosive growth, with its handle for tennis and soccer increasing by 214%. Today, it accounts for 38% of all in-play wagers. This rapid-fire style of betting not only creates countless opportunities but also demands cutting-edge strategies to keep up.

Opportunities in Micro-Betting

What makes micro-betting so appealing? Its fast pace and sheer volume of opportunities. For instance, a single NFL game can generate more than 100 micro-betting options, while a baseball game offers over 300 pitch-by-pitch markets. During NFL games, platforms handle around 12,000 micro-bets every minute, with 68% of users falling in the 21–34 age group. This younger demographic thrives on speed, making real-time updates absolutely essential.

To meet these demands, advanced modeling techniques like Bayesian updating are key to modern sports betting strategies. This approach starts with pre-match data as a baseline and refines win probabilities after each event - whether it’s a shot, foul, or possession change. Models that can process data feeds every 0.3–0.5 seconds ensure odds are updated instantly, reflecting the game’s momentum. Bettors typically have just 8–12 seconds to place their bets before odds shift or markets close. This means platforms capable of processing and delivering feedback at lightning speed have a clear competitive edge. But with these opportunities come equally significant challenges.

Challenges of Granular Feedback

The fast pace and high volume of micro-betting create serious technical challenges. Systems need single-digit millisecond latencies to keep up - delays of even 100–200 milliseconds can result in missed bets. As Bettoblock puts it:

The difference between winning and missing a bet can come down to milliseconds and if your systems can't keep up, users won't either.

Platforms must manage hundreds of live betting lines that update every few seconds, while also accounting for risk across interconnected markets. For example, a quarterback’s completion rate directly affects receiver yardage props. A $100 limit spread across 50 related micro-props could expose a platform to $5,000 in risk for a single outcome. Yoav Ziv of LSports highlights the stakes:

A two-second delay in line adjustments can cost thousands when sharp money hits across multiple correlated positions.

To navigate these challenges, platforms must implement ultra-fast event-driven processing and strict latency controls. Safeguards like aborting bets when data is older than 1,500 milliseconds are critical. Additionally, suspending markets during high-impact moments - like penalties or VAR reviews - helps minimize losses when outcomes become almost certain. Solving these hurdles is crucial for fully unlocking the potential of micro-betting.

Implementation Challenges and Best Practices

To make real-time feedback in live betting work effectively, systems need to tackle issues like scalability, data accuracy, and fault tolerance.

Scalability and Latency

Traditional databases like MySQL or PostgreSQL paired with standard REST APIs simply can't keep up with the speed required. They cap out at around 800 updates per second, but major events demand over 10,000 updates per second. For instance, during the final minutes of a close NBA game, API calls can surge by as much as 400%. As Rendy, a Guide Author at Freerdps, explains:

In the crazy world of real-time betting, that tiny delay is an arbitrage vulnerability waiting to be exploited.

The key to managing this is adopting an event-driven architecture. By switching from JSON to Protobuf, payload sizes shrink by 50–70%. Meanwhile, combining Apache Kafka with Redis allows for handling over 40,000 updates per second with latency under 15ms. This setup can support more than 300,000 concurrent users on a single high-performance VPS, compared to just 5,000 users on a traditional MySQL system. Fine-tuning hardware, such as using CPU pinning and setting vm.swappiness=0, can cut latency spikes from as high as 800ms down to just 20ms. Competitive platforms aim for an end-to-end latency of under 300ms from the moment an event happens to when it appears on screen. Once scalability challenges are addressed, the focus shifts to ensuring data accuracy.

Ensuring Data Quality

Reliability starts with using multiple data sources - official league feeds, data-driven sports betting analytics from various sportsbooks, and play-by-play data. Cross-referencing these sources helps detect inconsistencies, while automated validation rules flag impossible scenarios, like scores decreasing or game clocks running backward. To protect the platform and bettors during uncertain moments, market suspension protocols act as automated "stop buttons" during events like VAR reviews or penalties when data reliability dips. With data quality secured, the next step is to ensure the system can handle disruptions without breaking down.
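Validation rules of this kind compare each incoming snapshot with the previous one. A minimal sketch, assuming an elapsed-time clock as in soccer (a countdown clock would need the comparison reversed); the `Snapshot` type and error labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    home_score: int
    away_score: int
    clock_seconds: int  # elapsed match time


def validation_errors(prev: Snapshot, new: Snapshot) -> list[str]:
    """List the impossible transitions between two consecutive snapshots."""
    errors = []
    if new.home_score < prev.home_score or new.away_score < prev.away_score:
        errors.append("score_decreased")
    if new.clock_seconds < prev.clock_seconds:
        errors.append("clock_ran_backward")
    return errors


previous = Snapshot(home_score=1, away_score=0, clock_seconds=1800)
suspect = Snapshot(home_score=0, away_score=0, clock_seconds=1795)
print(validation_errors(previous, suspect))
```

A flagged snapshot is a prompt to hold the market and cross-check another feed, not proof that the feed is wrong.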

Fault-Tolerant Systems

Building a fault-tolerant system involves several strategies. Using immutable data structures and assigning dedicated Kafka partitions for each Match ID helps isolate and manage processing issues. Backpressure strategies, like pausing new connections when consumer lag exceeds 500ms, prevent system breakdowns during peak loads. Data redundancy, achieved by sourcing information from multiple providers, ensures continuity even if one feed fails. To prepare for high-stress scenarios, regular chaos testing simulates events (like goals) and scales up to 1 million concurrent users to identify bottlenecks before they impact live operations. As Rendy notes:

The Zero-Lag Architecture isn't theory - it's running in production right now making real money for real operators.

| Feature | Traditional RDBMS | Event-Driven (Kafka + Redis) |
|---|---|---|
| Latency | 800–2,000ms | 30–80ms |
| Updates per Second | ~800 | 40,000+ |
| Concurrent Users (per VPS) | ~5,000 | 300,000+ |
| Fault Tolerance | Synchronous (High lock risk) | Asynchronous (Decoupled/Resilient) |

Conclusion and Key Takeaways

Real-time feedback has transformed reactive betting into a more precise, data-driven strategy. But it’s not just about speed - it's about creating models that focus on calibration rather than raw accuracy. In other words, the goal is to ensure that predicted probabilities closely match actual outcomes, which is key to making informed decisions.

Using a multi-model consensus approach is another game-changer. By combining predictions from various models, you can reduce biases and errors that often plague single-algorithm strategies. This approach leads to more reliable forecasts, especially in dynamic and unpredictable game scenarios. To make this practical, disciplined risk management is crucial. Automated safeguards and fractional staking strategies are effective ways to handle the volatility of in-play betting. In European markets, where live betting accounts for over 70% of all wagers, these measures are not just helpful - they’re essential.
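Both ideas fit in a few lines. The sketch below averages several model probabilities and sizes the stake with a fractional Kelly rule. Kelly is one common fractional staking method and is an assumption here, as are the quarter fraction and the sample numbers.

```python
from statistics import mean


def consensus_probability(model_probs: list[float]) -> float:
    """Simple unweighted average of several models' win probabilities."""
    return mean(model_probs)


def fractional_kelly(prob: float, decimal_odds: float, fraction: float = 0.25) -> float:
    """Share of bankroll to stake; 0 when the bet has no positive edge."""
    b = decimal_odds - 1.0                 # net profit per unit staked
    full_kelly = (b * prob - (1.0 - prob)) / b
    return max(0.0, full_kelly * fraction)


# Three hypothetical models price the same outcome, offered at decimal odds 2.00.
p = consensus_probability([0.52, 0.55, 0.58])
stake_share = fractional_kelly(p, decimal_odds=2.00)
print(round(p, 3), round(stake_share, 4))
```

Staking a fraction of the full Kelly amount trades some expected growth for smaller swings, which suits the volatility of in-play markets.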

Platforms like WagerProof showcase how real-time feedback can be integrated into betting strategies. Its Edge Finder identifies mispriced lines using spread-diff analysis and z-scores, while the AI Game Simulator runs thousands of scenarios to calculate accurate win probabilities. With WagerBot Chat, you gain access to live professional data for real-time insights on factors like weather, injuries, or momentum shifts - without the risk of fabricated information. Additionally, the platform's Model Aggregator combines data from over 50 predictive models, creating a standardized consensus that minimizes individual model bias.

These tools and strategies demonstrate how advanced models and real-time insights can elevate betting strategies to a whole new level.

FAQs

What is a real-time feedback loop in live betting?

A real-time feedback loop in live betting is all about keeping up with the action as it unfolds. Live game data - like events on the field or changes in odds - is processed instantly. This constant flow of updated information allows bettors to adjust their predictions and strategies on the fly, helping them make quicker, more informed decisions as the game evolves.

How fast do live models need to update to stay accurate?

Live models need to update in real time - ideally within milliseconds or just a few seconds. This allows them to mirror ongoing game events accurately. By doing so, decisions are always grounded in the freshest data, keeping predictions synchronized with the live action as it unfolds.

What should a live betting model do after a goal, injury, or red card?

A live betting model needs to adjust probabilities instantly to match the current game state. Changes in the score, player conditions, or momentum can shift the dynamics significantly, and the model should account for these factors in real time. This quick recalibration ensures the odds stay accurate and may reveal new opportunities for value bets. Techniques like Bayesian updates or incremental adjustments help incorporate fresh game data and market trends seamlessly.

