When to Retrain Betting Models for Better ROI

Your betting model won’t stay effective forever. Sports constantly change - rosters, coaching methods, and even league rules evolve. That means the patterns your model relies on will drift over time, leading to poor predictions and shrinking profits. This is called model drift, and ignoring it can crush your ROI.

Here’s what you need to know:

- Key Warning Signs: Declining ROI, misaligned probabilities, and a rising Ranked Probability Score (RPS) signal it’s time to retrain.

- Retraining Frequency: Stable sports like the NFL may need updates quarterly, while volatile markets like horse racing demand monthly or even weekly adjustments.

- Methods That Work: Techniques like walk-forward optimization and ensemble models help keep predictions sharp.

- Validation Is Critical: Test updates using small stakes and track metrics like Closing Line Value (CLV) to ensure your model maintains an edge.

Keeping your model updated isn’t optional - it’s the only way to stay profitable in an ever-changing betting landscape.

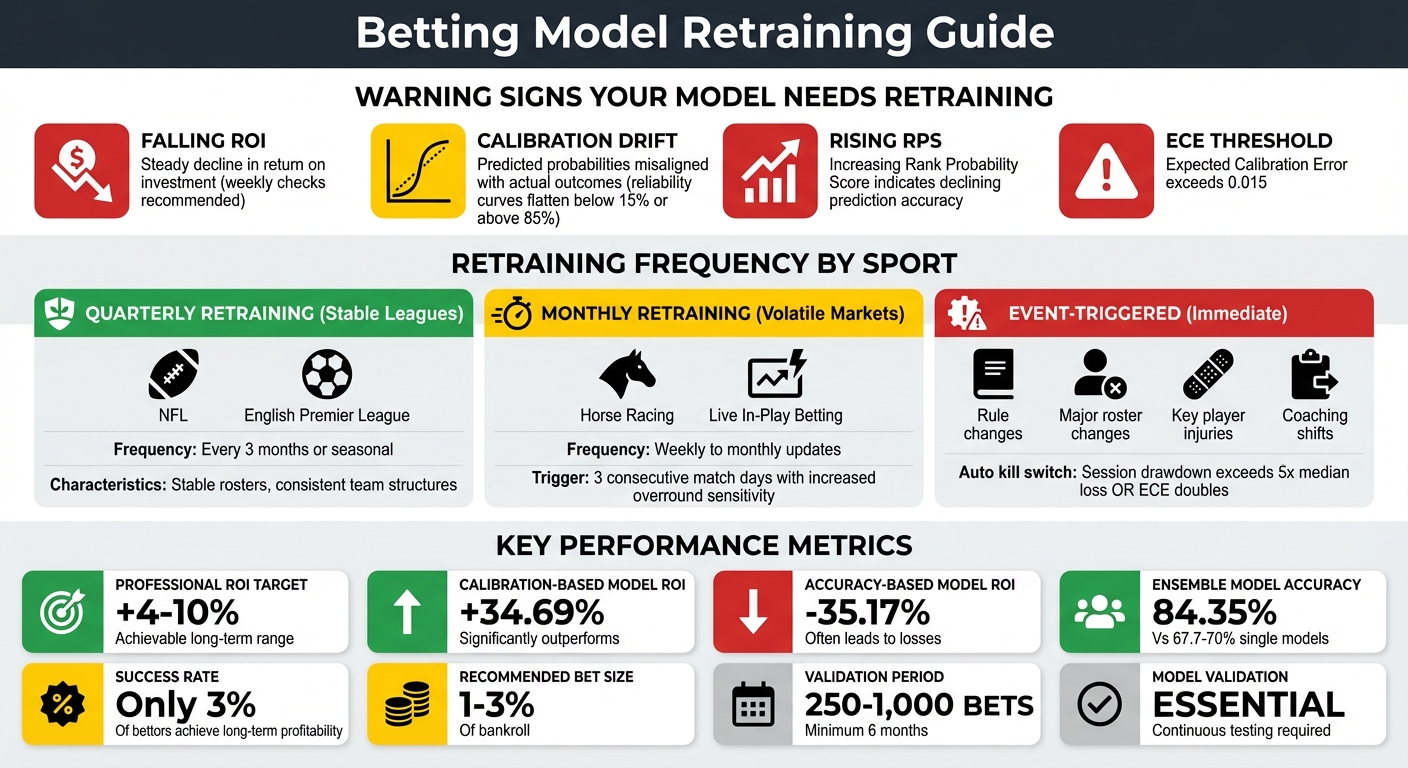

Betting Model Retraining Schedule by Sport Type and Warning Signs

Signs Your Betting Model Needs Retraining

Keeping an eye on key performance metrics is crucial when managing a betting model. If you notice declining ROI, calibration drift, or a rising Ranked Probability Score (RPS), it might be time to retrain. Catching these issues early lets you address them before they harm your profits.

Falling ROI and Profits

A steady drop in ROI is a red flag that your model's effectiveness is slipping. Regular weekly checks can help you spot these declines early, giving you a chance to take corrective action.

Calibration and Accuracy Problems

Calibration drift happens when your model's predicted probabilities no longer align with real-world outcomes. For instance, if bets your model rates as high-probability aren't winning at anything like the predicted rate, its probabilities can no longer be trusted. This misalignment throws off staking strategies like the Kelly Criterion, which size bets directly from those probabilities. Reliability curves can pinpoint the problem: when the curve flattens in the extreme ranges (e.g., below 15% or above 85%), the model's most confident predictions are no longer backed by outcomes, which signals the need to retrain on updated data.
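The reliability curve and Expected Calibration Error (ECE) can be computed in a few lines. This is a minimal sketch, assuming binary win/loss outcomes and equal-width probability bins; the function names are illustrative and not taken from any library.

```python
def reliability_table(probs, outcomes, n_bins=10):
    """Return (count, mean predicted prob, observed win rate) per non-empty bin."""
    bins = [[0, 0.0, 0] for _ in range(n_bins)]  # count, sum of probs, wins
    for p, y in zip(probs, outcomes):
        i = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls in the last bin
        bins[i][0] += 1
        bins[i][1] += p
        bins[i][2] += y
    return [(n, sp / n, w / n) for n, sp, w in bins if n]


def expected_calibration_error(probs, outcomes, n_bins=10):
    """Bet-weighted average gap between predicted and observed win rates."""
    total = len(probs)
    return sum(
        n / total * abs(mean_p - win_rate)
        for n, mean_p, win_rate in reliability_table(probs, outcomes, n_bins)
    )


# Four bets priced at 90% that won three times: a 15-point calibration gap.
ece = expected_calibration_error([0.9, 0.9, 0.9, 0.9], [1, 1, 1, 0])
```

Plotting the second column of the table against the third gives the reliability curve; a well-calibrated model sits close to the diagonal.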

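Rising Ranked Probability Score (RPS)

RPS measures how far your predicted probabilities sit from what actually happened across ordered outcomes such as home win, draw, and away win; lower is better. A climbing RPS means your forecasts are getting worse, and with them your ability to separate profitable bets from unprofitable ones. Using rolling window validation - such as analyzing data from the last three seasons - can help uncover these shifts before they significantly impact your ROI.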
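For reference, RPS for a single match can be computed as below. This is a sketch of the standard formula, assuming the outcomes are listed in a fixed order (here home win, draw, away win) and the probabilities sum to 1; the function name is illustrative.

```python
def ranked_probability_score(probs, outcome_index):
    """RPS for one event; 0 is a perfect forecast, 1 is the worst possible."""
    cum_pred = 0.0
    cum_obs = 0.0
    total = 0.0
    # Compare cumulative predicted and observed distributions over the
    # first r - 1 categories (the last cumulative term is always zero).
    for i, p in enumerate(probs[:-1]):
        cum_pred += p
        cum_obs += 1.0 if i == outcome_index else 0.0
        total += (cum_pred - cum_obs) ** 2
    return total / (len(probs) - 1)


# Forecast of 50% home, 30% draw, 20% away, and the home side wins.
score = ranked_probability_score([0.5, 0.3, 0.2], outcome_index=0)
```

Averaging this score over each rolling window and tracking the trend is what reveals a climbing RPS.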

Spotting these warning signs early ensures you can retrain your model in time to maintain your betting edge.

How Often to Retrain Models by Sport Type

Spotting drift is only half the job; you also need a sensible retraining cadence. Retraining schedules depend on factors like league stability, roster changes, and market conditions. Understanding these nuances helps you balance timely updates against unnecessary retraining. Below are tailored recommendations for different sports environments.

Quarterly Retraining for Stable Leagues

Sports leagues such as the NFL and English Premier League tend to have stable team structures and rosters during the season. For these, quarterly or seasonal retraining is usually sufficient. A full retraining at the start of the season is ideal, with quarterly reviews to address gradual changes.

Monthly Retraining for Volatile Markets

In fast-changing environments like horse racing or live in-play betting, where conditions shift rapidly, more frequent retraining is necessary. Weekly or monthly updates are recommended. If the Expected Calibration Error (ECE) surpasses 0.015, retraining should be initiated. In live betting, pay attention to three consecutive match days showing increased overround sensitivity - this signals the need for an update.

Event-Triggered Retraining

Significant changes in data or concepts - like rule adjustments, major roster changes, injuries to key players, or coaching shifts - demand immediate retraining. In live betting scenarios, consider using an automatic kill switch if session drawdown exceeds five times the median loss or if the ECE doubles. These measures ensure your models stay responsive to sudden shifts.
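The kill-switch rule above can be sketched as a simple check. This assumes "median loss" means the median size of past losing bets and that a baseline ECE was recorded when the model was last validated; both readings, and the function name, are assumptions for illustration.

```python
from statistics import median


def should_halt(session_drawdown, past_losses, ece_now, ece_baseline):
    """Return True if either kill-switch condition is met.

    session_drawdown: money lost so far this session (positive number)
    past_losses: sizes of historical losing bets (positive numbers)
    """
    drawdown_breach = session_drawdown > 5 * median(past_losses)
    ece_breach = ece_now >= 2 * ece_baseline
    return drawdown_breach or ece_breach


# Median loss is 10, so the drawdown limit is 50; a 60 drawdown trips it.
halt = should_halt(60, [10, 10, 12], ece_now=0.010, ece_baseline=0.010)
```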

Methods for Retraining Betting Models

When it’s time to retrain your betting models, the method you choose can make all the difference. The right approach ensures your model adapts to new trends while steering clear of pitfalls like overfitting.

Walk-Forward Optimization

Walk-forward optimization involves training your model on a set historical period - usually about two years - and then testing it on the following year to mimic actual betting conditions. This technique helps tackle issues like model drift and shifting data patterns, which are common in sports betting.
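A minimal sketch of how the walk-forward windows could be generated, assuming data is grouped by season and each window trains on two seasons and tests on the next one. The function name and the season labels are illustrative.

```python
def walk_forward_splits(seasons, train_size=2, test_size=1):
    """Return (train_seasons, test_seasons) pairs, rolling forward one season at a time."""
    ordered = sorted(seasons)
    splits = []
    for start in range(len(ordered) - train_size - test_size + 1):
        train = ordered[start:start + train_size]
        test = ordered[start + train_size:start + train_size + test_size]
        splits.append((train, test))
    return splits


splits = walk_forward_splits([2019, 2020, 2021, 2022, 2023])
# First window trains on 2019-2020 and tests on 2021; the last tests on 2023.
```

Each test season always comes after its training seasons, so the model is never scored on data that precedes what it was fitted on.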

To avoid data leakage, use lagged variables so your predictions only rely on pre-bet data. For instance, in June 2025, developer throwawayhub25 tested an NBA moneyline model using an 80/20 train-test split on around 3,550 games. The model used logistic regression for calibration, ensuring predicted probabilities matched real-world outcomes, and achieved a steady 10% ROI over 1,500 backtested bets.

"Sports bettors who wish to increase profits should therefore select their predictive model based on calibration, rather than accuracy."

– Machine Learning with Applications, Volume 16

Calibration, not raw accuracy, should guide your retraining process. Research on NBA betting models found that models chosen for calibration achieved a +34.69% ROI, while those selected for accuracy suffered a –35.17% loss. During walk-forward testing, reliability curves can confirm whether a 70% win probability translates into wins 70% of the time. This approach helps maintain consistent ROI, even as sports data evolves.

Next, updating models with ensemble techniques can further refine predictions.

Ensemble Model Updates

Using ensemble methods - combining models like random forest and CatBoost - can significantly enhance prediction reliability, especially when adding more data to a single model no longer improves outcomes. This is particularly useful against sharp bookmakers who adapt to static systems.

While single NBA models are reported to reach 67.7%–70% accuracy, ensembles are reported to push that figure to about 84.35%, with a reported error reduction of roughly 12%. ROI tells the more important story. In the NBA research cited above, selecting models for accuracy produced a –35.17% ROI, accuracy-based ensembles are reported at +5.56%, and calibration-based selection reached +34.69%.

Different aggregation techniques offer unique benefits. Weighted averaging prioritizes models with strong past performance, median aggregation filters out extreme predictions, and stacking - where a meta-model combines predictions - can optimize results. However, stacking requires careful validation to avoid overfitting. These strategies help maintain profitability even as market dynamics evolve.
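The two simpler aggregation schemes can be sketched as follows, assuming each model outputs a win probability for the same event and the weights come from some measure of past performance. How the weights are derived is left open here, and the function names are illustrative.

```python
from statistics import median


def weighted_average(preds, weights):
    """Blend probabilities, giving more influence to higher-weighted models."""
    return sum(p * w for p, w in zip(preds, weights)) / sum(weights)


def median_aggregate(preds):
    """Take the middle prediction, which ignores a single extreme model."""
    return median(preds)


blended = weighted_average([0.60, 0.80], weights=[1, 3])
robust = median_aggregate([0.55, 0.60, 0.95])  # the 0.95 outlier is ignored
```

Stacking replaces these fixed rules with a fitted meta-model, which is why it needs its own out-of-sample validation.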

Finally, incorporating adaptive bet sizing strategies can fine-tune your wagering approach.

Adaptive Kelly Criterion Integration

Incorporating an adaptive Kelly Criterion refines bet sizing by adjusting to your model's updated edge. However, this method only works with well-calibrated models - miscalibrated probabilities can quickly drain your bankroll.

"Kelly betting only works with a well-calibrated model."

– Machine Learning with Applications, Volume 16

Between April 17 and May 18, 2021, developer Kevin Tomas tested a football model trained on data going back to 2015. Over 132 bets, the strategy returned a $250.71 profit on $1,542.40, a 16.25% ROI. The model analyzed over 100,000 historical games using the Footy-Stats API.

When applying adaptive Kelly, keep individual bets between 1–3% of your bankroll to manage variance. Pair this with smart line shopping - taking advantage of price differences across sportsbooks - to boost your implied probability edge by 2–5%. While professional bettors aim for a steady ROI of 4% to 10%, only about 3% of sports bettors achieve consistent long-term success. Capping stakes this way trades some of Kelly's theoretical growth for protection against calibration errors.
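A capped Kelly stake can be sketched as below, assuming decimal odds and a single binary bet. The `fraction` parameter (fractional Kelly) is an added assumption for illustration; the 3% cap mirrors the upper bound suggested above.

```python
def kelly_stake(prob, decimal_odds, bankroll, fraction=0.5, cap=0.03):
    """Stake in currency units, or 0.0 when the model sees no edge."""
    b = decimal_odds - 1.0  # net profit per unit staked on a win
    full_kelly = (b * prob - (1.0 - prob)) / b
    if full_kelly <= 0:
        return 0.0
    return bankroll * min(fraction * full_kelly, cap)


# 55% win probability at even money: full Kelly is 10%, half Kelly is 5%,
# and the 3% cap limits the stake to 30 on a 1,000 bankroll.
stake = kelly_stake(0.55, 2.0, bankroll=1000)
```

Because the stake is recomputed from the model's current probability, it adapts automatically whenever a retrained model revises its edge.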

Testing Model Performance After Retraining

Once you've retrained your model to address drift and calibration problems, testing becomes absolutely critical. Skipping this step could lead to costly mistakes. The goal here is to ensure your model performs well under real-world conditions before putting significant resources on the line.

Live Testing with Small Stakes

The safest way to test your retrained model is by starting with small stakes. This allows you to observe how it behaves in actual market conditions without risking your entire bankroll. Before going live, test the model on a set of recent, unseen data using a rolling-window approach to confirm its calibration holds up over time.

Set up automated alerts for any breaches in key metrics. For instance, if your Expected Calibration Error (ECE) exceeds 0.015, you'll immediately know that the model's probabilities are starting to drift. Additionally, run weekly drift tests using tools like the Kolmogorov-Smirnov test, which compares the distribution of recent inputs or predicted probabilities against a baseline period, to catch early signs of degradation.
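The two-sample Kolmogorov-Smirnov statistic is the largest gap between two empirical distribution functions. Below is a dependency-free sketch, assuming you compare predicted probabilities (or a single feature) from a baseline period against the most recent week; the function name is illustrative.

```python
def ks_statistic(baseline, recent):
    """Largest vertical gap between the two empirical CDFs (0 = identical)."""
    a, b = sorted(baseline), sorted(recent)
    i = j = 0
    gap = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        # Advance both samples past every value <= x, then compare CDFs at x.
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        gap = max(gap, abs(i / len(a) - j / len(b)))
    return gap


drift = ks_statistic([0.1, 0.2, 0.3, 0.4], [0.3, 0.4, 0.5, 0.6])
```

If SciPy is available, `scipy.stats.ks_2samp` returns the same statistic along with a p-value, which is easier to turn into an alert threshold.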

Once you've confirmed the model's reliability under small stakes, you can begin to assess whether its edge holds up in real-world scenarios.

Edge Persistence Checks

After initial live testing, the next step is to determine if your model consistently maintains its edge. A reliable way to measure this is by using Closing Line Value (CLV). This metric compares the odds you actually took when placing the bet to the market's closing line - or Betfair Starting Price (BSP) in exchange markets. If you regularly get better prices than the close, meaning the market moves toward your model's view after you bet, it’s a strong indicator of a persistent edge.

CLV is particularly useful because it serves as a leading indicator, validating your model’s edge even before outcomes are settled. To enhance this analysis:

- Log key metadata for every bet, such as timestamps, league, market type, odds, probabilities, and edge.

- Use closing odds from sharp bookmakers or a trimmed mean of the top books as a proxy for the true market price.

- Break down performance by league, market type, and time-to-start to identify where your model excels or struggles.

Be cautious of lookahead bias - never validate your model with future odds data that wouldn’t have been available at the time of placing the bet.
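CLV and the trimmed-mean closing price from the checklist above can be sketched as follows. This assumes decimal odds and ignores bookmaker margin; the function names are illustrative.

```python
def trimmed_mean(prices, trim=1):
    """Average closing price after dropping the `trim` lowest and highest quotes."""
    ordered = sorted(prices)
    kept = ordered[trim:len(ordered) - trim] or ordered  # too few quotes: keep all
    return sum(kept) / len(kept)


def clv(bet_odds, closing_odds):
    """Positive when the price taken was better than the closing price."""
    return bet_odds / closing_odds - 1.0


def mean_clv(bets):
    """Average CLV over (bet_odds, closing_odds) pairs."""
    return sum(clv(taken, close) for taken, close in bets) / len(bets)


close = trimmed_mean([1.90, 2.00, 2.02, 2.50])  # drops 1.90 and 2.50
edge = clv(2.10, 2.00)  # took 2.10 on a line that closed at 2.00
```

Only the odds recorded at bet time and the eventual closing price go into the calculation, which keeps it free of lookahead bias.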

Long-Term ROI Validation

To confirm sustained improvements after retraining, track your model’s performance over rolling windows of 250 to 1,000 bets. This approach helps you identify trends and potential regime shifts over time. A minimum tracking period of six months is recommended to filter out short-term variance and ensure your model's adjustments are genuinely effective. Perform monthly calibration audits and save snapshots to monitor for drift.
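Rolling-window ROI can be tracked with a small helper like the one below. It is a sketch that assumes bets are logged in chronological order as (stake, profit) pairs; the default window of 250 bets matches the lower bound suggested above.

```python
from collections import deque


def rolling_roi(bets, window=250):
    """ROI (profit / amount staked) over the most recent `window` bets, per bet."""
    recent = deque(maxlen=window)  # old bets drop off automatically
    series = []
    for stake, profit in bets:
        recent.append((stake, profit))
        staked = sum(s for s, _ in recent)
        series.append(sum(p for _, p in recent) / staked if staked else 0.0)
    return series


# Tiny window for illustration: each value covers at most the last two bets.
roi_series = rolling_roi([(10, 5), (10, -10), (10, 10)], window=2)
```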

If your ROI shows positive results but CLV hovers near zero in smaller samples, this could indicate variance rather than a true edge. In such cases, consider reducing stake sizes until you see consistent improvement in CLV. Breaking down metrics by league and market type can also clarify where your model is truly excelling. This segmented analysis will help you determine whether the retraining has improved overall performance or only in specific areas.
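The segmented breakdown can be sketched as a simple group-by, assuming each logged bet is a dictionary with `stake`, `profit`, and a segment field such as `league`. These field names are hypothetical and should be adapted to your own bet log.

```python
from collections import defaultdict


def roi_by_segment(bets, key="league"):
    """Return {segment: ROI} where ROI is total profit / total staked."""
    totals = defaultdict(lambda: [0.0, 0.0])  # staked, profit
    for bet in bets:
        bucket = totals[bet[key]]
        bucket[0] += bet["stake"]
        bucket[1] += bet["profit"]
    return {seg: profit / staked for seg, (staked, profit) in totals.items() if staked}


log = [
    {"league": "NFL", "stake": 10, "profit": 5},
    {"league": "NFL", "stake": 10, "profit": -10},
    {"league": "EPL", "stake": 10, "profit": 2},
]
by_league = roi_by_segment(log)
```

Passing a different `key` (for example a market-type field) reuses the same function for the other breakdowns.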

Using WagerProof for Model Retraining

When it's time to retrain your betting model, having the right tools can make all the difference. WagerProof provides a comprehensive system to simplify this process, ensuring your model stays accurate and competitive. Retraining requires reliable data and the ability to spot when your model is losing its edge. WagerProof offers real-time performance tracking and tools to validate your updates post-retraining.

Here’s how WagerProof helps keep your model sharp and effective.

Edge Finder for Real-Time Alerts

The Edge Finder tool in WagerProof is designed to flag prediction market mismatches and value bet opportunities as they arise. It’s like having an early warning system for market changes. If your model starts overlooking edges that Edge Finder detects, it’s a clear sign that retraining might be overdue. This tool compares odds across multiple platforms and highlights discrepancies in real time, giving you immediate insight into market inefficiencies. With this kind of monitoring, you can address model drift before it starts cutting into your ROI, rather than discovering issues long after they’ve caused damage.

Testing Models with WagerBot Chat

Before deploying updates, WagerBot Chat lets you simulate scenarios and test your model’s logic. Unlike generic AI tools that might rely on outdated or inaccurate data, WagerBot pulls from live, professional-grade data sources to ensure precision. You can test specific game conditions, compare probability outputs with current market lines, and identify any calibration issues. This controlled testing environment allows you to stress-test your retrained model, ensuring that adjustments align with your goals and the strategies discussed earlier.

Historical Data for Model Updates

WagerProof also provides access to detailed historical data, which is invaluable for detecting drift and refining your model. By comparing predicted probabilities with historical baselines and realized CLV, you can identify where your model might be falling short. During retraining, you can dive into feature importance to determine which variables - such as weather or injuries - are causing errors. Each historical snapshot includes acquisition timestamps in UTC, so you can confirm that every input was available before the bet was placed and guard against lookahead bias. This level of transparency helps keep your backtests clean from one iteration to the next.

Conclusion

Keeping your betting model sharp requires ongoing attention to performance metrics and market changes. Watch key indicators like ROI, calibration, and Ranked Probability Score (RPS) to spot early signs of drift. Regularly running drift tests can help you detect shifts in data distribution before they impact your bankroll.

When retraining becomes necessary, proven methods like walk-forward optimization and ensemble updates can help maintain the accuracy of your predictions. Validation is crucial - use rolling windows instead of static testing to better mimic real-time conditions. Incorporating golden datasets, which account for corner cases and long-term trends, ensures the retrained model surpasses the previous version in performance.

Pay close attention to bankroll volatility, as unexpected swings might signal issues with model calibration or stake sizing. Identifying and addressing these problems early can prevent them from escalating.

Tools like WagerProof simplify this entire process. Features such as Edge Finder for real-time alerts, WagerBot Chat for robust testing, and clean historical data with UTC timestamps help you catch drift quickly, validate updates thoroughly, and avoid lookahead bias. These capabilities enable you to make smarter, data-driven decisions, ensuring your betting edge - and ROI - remains strong.

FAQs

How can I tell if poor results are variance or model drift?

To figure out whether poor results are due to variance or model drift, focus on metrics like calibration and performance trends. If the calibration stays consistent but ROI varies, variance is likely the issue. On the other hand, if calibration metrics or stability show a decline over time, this points to model drift. Keeping a close eye on these metrics regularly can help you identify the exact cause of performance problems.

What’s the simplest retraining schedule to start with by sport?

The easiest way to start is a fixed calendar schedule matched to the sport: quarterly for stable leagues like the NFL, and monthly or weekly for volatile markets like horse racing or live in-play betting. A fixed schedule is especially useful when monitoring for data changes would take up too many resources. You can also retrain after collecting a substantial amount of new data (like 1,500 bets). Another clear trigger is when the deployed model's performance falls below the level it showed when it was last validated. Whichever trigger you use, sticking to a consistent timeline helps maintain accuracy and reliability.

How do I validate a retrained model before increasing bet size?

To ensure a retrained betting model performs effectively, it's crucial to evaluate both its calibration and predictive accuracy. Calibration measures how closely the model's predicted probabilities match actual outcomes. Tools like reliability plots and the Brier Score can help with this assessment.

Another key step is comparing the odds you took to the market's closing odds (CLV) to confirm a predictive advantage. Additionally, track essential metrics such as ROI, hit rate, and log loss across a substantial sample size.

Only consider increasing your bet size if the model consistently demonstrates profitability and reliability over time.
